Most enterprise proposal teams are running a manual process with an AI tool bolted on top. They'll use a chatbot to draft a few answers, copy them into Word, and call it automation. That's not AI proposal management. That's autocomplete with extra steps.

Real AI proposal management transforms the entire operation — from the moment an RFP arrives in someone's inbox to the moment the signed contract enters your CRM. It's a system, not a feature. And the teams that implement it as a system are the ones seeing 60-80% reductions in response cycle time while actually improving win rates.

This playbook maps every stage of enterprise proposal management, shows where AI changes the economics at each stage, and gives you the decision criteria and KPIs to measure whether it's working. If your team handles more than 20 RFPs per quarter and you haven't systematized the process end-to-end, you're leaving deals on the table.

Getting Started: What Is Enterprise Proposal Management and When Is Your Team Ready for AI?

Enterprise proposal management is the discipline of systematically handling the full lifecycle of proposals — from intake triage through content assembly, compliance review, submission, and outcome tracking — at a scale where ad-hoc processes break down.

It's different from using proposal software. Plenty of teams have a tool but no system. They react to each RFP as a one-off event, scramble to assemble content, and learn nothing from the outcome. Enterprise proposal management turns that reactive cycle into a repeatable operation with defined stages, clear ownership, measurable KPIs, and continuous improvement.

Your team is ready for AI-powered proposal management when:

- You respond to 20+ RFPs, DDQs, or security questionnaires per quarter

- More than 3 people are involved in a typical proposal response

- You're rewriting answers to questions you've answered before — across proposals, across quarters

- Your win rate is inconsistent and you can't explain why some proposals win and others don't

- Your best proposal writers are bottlenecks because everything routes through them

- You've lost deals because you couldn't respond fast enough, even when you had the right answers

If three or more of those describe your team, you're past the point where better templates or more diligent project management will fix the problem. You need a system that scales.

Stage 1: RFP Intake Triage and Go/No-Go Qualification

Every proposal operation starts with the same question: should we respond to this? The teams that answer this question systematically win more deals. The teams that answer it emotionally — "the CEO knows someone" or "we can't afford to say no" — waste cycles on unwinnable opportunities.

The Triage Problem at Scale

At 20+ RFPs per quarter, manual triage breaks down. Someone in sales forwards an RFP with "can we do this?" attached. The proposal manager opens it, skims the requirements, asks three people for their opinion, and makes a gut call. That process takes 2-4 hours and produces inconsistent results.

How AI Changes Intake Triage

AI-powered triage automates the first pass:

1. Requirement extraction — The system parses the incoming RFP document and identifies key requirements: compliance certifications needed, technical capabilities requested, timeline constraints, geographic restrictions, and budget signals.

2. Fit scoring — Requirements are matched against your company's current capabilities, certifications, and past proposal history. The system produces a fit score that accounts for how well your existing knowledge base covers the questions being asked — not just whether you theoretically could do the work, but whether you have the approved content to prove it.

3. Competitive signal detection — Procurement language, incumbent references, and specification patterns can signal whether an RFP is genuinely competitive or wired for another vendor. AI systems trained on your win/loss history can flag these patterns.

4. Resource estimation — Based on RFP complexity, question volume, and the percentage of questions coverable by existing content, the system estimates the human effort required to complete the response.
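Step 4 comes down to simple arithmetic once you know question volume and coverage. Here is a minimal sketch, not how any particular platform computes effort. The function name and both per-question minute values (`review_minutes`, `authoring_minutes`) are hypothetical placeholders you would calibrate against your own proposal history.

```python
def estimate_effort_hours(question_count: int, coverage: float,
                          review_minutes: float = 5.0,
                          authoring_minutes: float = 45.0) -> float:
    """Estimate the human hours needed to complete a response.

    coverage is the fraction (0.0 to 1.0) of questions answerable from
    existing approved content. Covered questions only need review;
    uncovered ones need fresh authoring. Both per-question minute
    values are hypothetical defaults, not benchmarks.
    """
    if not 0.0 <= coverage <= 1.0:
        raise ValueError("coverage must be between 0.0 and 1.0")
    covered = question_count * coverage
    uncovered = question_count - covered
    total_minutes = covered * review_minutes + uncovered * authoring_minutes
    return round(total_minutes / 60, 1)


# A 200-question RFP with 75% coverage under the assumed defaults.
print(estimate_effort_hours(200, 0.75))
```

Even a rough estimate like this makes the cost of a low-coverage RFP visible before anyone commits to responding.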

The Go/No-Go Framework

Use the AI triage output to make structured decisions:

- Green (auto-qualify): High fit score, 70%+ existing content coverage, timeline feasible, no disqualifying requirements. Start immediately.

- Yellow (committee review): Moderate fit score, gaps in specific areas, or competitive signals present. Review with sales leadership — 15 minutes, not 2 hours.

- Red (decline or deprioritize): Low fit score, major capability gaps, or clear incumbent advantage. Decline professionally with a template response that keeps the door open.
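The framework above can be written down as a small decision rule so it gets applied the same way every time. This is a sketch under stated assumptions: the 70% coverage bar comes from the Green criteria, while the fit-score cutoffs (`high_fit`, `low_fit`) and the field names are hypothetical. It also treats any disqualifying requirement as an automatic Red, which is one reasonable reading of the framework rather than the only one.

```python
from dataclasses import dataclass


@dataclass
class TriageResult:
    fit_score: float                 # 0.0 to 1.0, from the fit-scoring step
    content_coverage: float          # fraction of questions covered by approved content
    timeline_feasible: bool
    disqualifying_requirement: bool
    competitive_signals: bool        # e.g. language that hints at an incumbent


def go_no_go(t: TriageResult, high_fit: float = 0.75, low_fit: float = 0.4) -> str:
    """Return "green", "yellow", or "red" for a triaged RFP.

    high_fit and low_fit are assumed cutoffs; tune them to your own data.
    """
    if t.disqualifying_requirement or t.fit_score < low_fit:
        return "red"
    if (t.fit_score >= high_fit and t.content_coverage >= 0.70
            and t.timeline_feasible and not t.competitive_signals):
        return "green"
    # Everything in between goes to the short committee review.
    return "yellow"
```

Keeping the rule explicit means a Yellow review can focus on the one or two inputs that kept the RFP out of Green.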

KPI: Track your bid-to-win ratio before and after implementing structured triage. Teams that move from gut-feel to data-driven triage typically see bid-to-win improvements of 15-25% — not because they write better proposals, but because they stop wasting cycles on the wrong ones.

Stage 2: Content Library Governance and Knowledge Management

Your content library is the engine that powers AI proposal management. If the engine runs on outdated fuel — stale SOC reports, last year's product descriptions, deprecated compliance language — the AI will generate confidently wrong answers. Garbage in, garbage out applies doubly when AI is generating at speed.

The Knowledge Management Challenge

Most enterprise teams have content scattered across SharePoint, Google Drive, Confluence, email threads, and individual team members' local drives. The "knowledge base" is actually 15 different repositories maintained by 15 different people with 15 different update cadences.

AI proposal management forces discipline on this problem because the system only works as well as its sources.

Building an Effective Content Library

Source classification. Organize content into distinct categories with different governance rules:

- Compliance and regulatory — SOC reports, certifications, regulatory filings. Updated on certification cycles. Requires compliance team sign-off before AI can use updated versions.

- Product and technical — Feature descriptions, architecture documentation, integration specifications. Updated with product releases. Requires product team sign-off.

- Case studies and proof points — Customer outcomes, metrics, deployment timelines. Updated quarterly. Requires customer success or marketing sign-off.

- Boilerplate — Company overview, leadership bios, office locations. Updated annually or on change. Lower governance burden.

Version control. Every document in the knowledge base should be versioned, with the AI always pulling from the most recently approved version. When your team uploads a new SOC 2 Type II report, the old one moves to archive — the AI stops referencing it immediately.
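One way to picture the version rule is a lookup that only ever returns the newest approved version of a document. The sketch below assumes a hypothetical `DocVersion` record with an integer version number and an approval flag. Real systems will track more metadata, but the selection logic is the same idea.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DocVersion:
    doc_id: str
    version: int
    approved: bool


def active_version(versions: list[DocVersion], doc_id: str) -> Optional[DocVersion]:
    """Return the highest-numbered approved version, or None if there is none.

    Unapproved uploads are ignored, so a draft never displaces the
    version that reviewers have already signed off on.
    """
    candidates = [v for v in versions if v.doc_id == doc_id and v.approved]
    return max(candidates, key=lambda v: v.version, default=None)


library = [
    DocVersion("soc2-type2", 1, approved=True),
    DocVersion("soc2-type2", 2, approved=True),
    DocVersion("soc2-type2", 3, approved=False),  # uploaded, awaiting sign-off
]
print(active_version(library, "soc2-type2"))
```

In this example the lookup returns version 2: version 1 is superseded and version 3 has not been approved yet.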

Freshness alerts. Set expiration triggers on time-sensitive content. If your SOC 2 report is 10 months old and renewal is at 12 months, your team should know before the AI starts generating answers with an about-to-expire certification reference.
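A freshness alert is a date comparison with a warning window. A minimal sketch follows; the 60-day `warn_days` default and the status labels are assumptions, not a recommendation.

```python
from datetime import date, timedelta


def freshness_status(expires_on: date, today: date, warn_days: int = 60) -> str:
    """Classify a time-sensitive document as current, expiring_soon, or expired."""
    if today >= expires_on:
        return "expired"
    if expires_on - today <= timedelta(days=warn_days):
        return "expiring_soon"
    return "current"


# A report that lapses on 15 January, checked in late November.
print(freshness_status(date(2025, 1, 15), today=date(2024, 11, 20)))
```

Run on a schedule against every certification in the library, a check like this gives the compliance team a renewal list before the AI cites anything stale.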

Access controls. Not all content should be available for all proposals. Restrict NDA-protected customer references, internal pricing, and draft materials from the AI's retrieval scope for external proposals.

KPI: Measure content coverage rate — what percentage of questions in your typical RFP can the AI answer from existing content at an acceptable confidence level? Most teams start at 40-50% and reach 75-85% within two quarters of disciplined knowledge management.
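Coverage rate is easy to compute if your platform exposes a confidence score per question. The sketch below assumes scores on a 0.0 to 1.0 scale and a hypothetical acceptance threshold of 0.8. Substitute whatever scale and threshold your team actually uses.

```python
def content_coverage_rate(confidences: list[float], threshold: float = 0.8) -> float:
    """Share of questions whose best draft meets the confidence threshold."""
    if not confidences:
        return 0.0
    covered = sum(1 for c in confidences if c >= threshold)
    return covered / len(confidences)


# Four questions, three of which clear the assumed 0.8 bar.
print(content_coverage_rate([0.9, 0.85, 0.4, 0.95]))
```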

Stage 3: AI-Assisted Drafting and SME Review Workflows

This is where most teams think AI proposal management begins and ends — the drafting step. It's important, but it's one stage in a five-stage system. Teams that nail the drafting workflow but neglect intake, governance, compliance, and outcome tracking get faster at producing mediocre proposals.

How AI Drafting Actually Works in Enterprise Environments

Enterprise AI proposal drafting is not chatbot-style text generation. It's retrieval-augmented generation (RAG) scoped to your approved content library:

1. Question parsing — The system reads each RFP question and classifies it by category: compliance, technical, commercial, operational, case study.

2. Content retrieval — For each question, the AI searches your knowledge base for the most relevant approved content — prior approved answers to similar questions, policy documents, product documentation, and customer evidence.

3. Draft generation — Using the retrieved content as source material, the AI generates a draft answer that addresses the specific question while staying grounded in your approved language. The draft includes confidence scores and source citations.

4. Gap identification — Questions where the AI can't find sufficient source material are flagged immediately — not answered with generic text. These gaps become the SME assignment queue.
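To make the retrieval and gap-identification steps concrete, here is a toy sketch. It is not how a production RAG system works: word overlap (Jaccard similarity) stands in for embedding or hybrid search, the overlap score doubles as a crude "confidence", and the generation step is left out entirely. The function names, the `min_score` cutoff, and the sample library entries are all hypothetical.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, library: dict[str, str], min_score: float = 0.2):
    """Return (source_id, score) for the best match, or None if nothing clears min_score."""
    q = tokens(question)
    best_id, best_score = None, 0.0
    for source_id, text in library.items():
        t = tokens(text)
        union = q | t
        score = len(q & t) / len(union) if union else 0.0
        if score > best_score:
            best_id, best_score = source_id, score
    if best_id is None or best_score < min_score:
        return None
    return best_id, best_score


def triage_questions(questions: list[str], library: dict[str, str], min_score: float = 0.2):
    """Split questions into cited draft candidates and a gap queue for SMEs."""
    drafts, gaps = {}, []
    for q in questions:
        hit = retrieve(q, library, min_score)
        if hit is None:
            gaps.append(q)  # flagged, never answered with generic text
        else:
            drafts[q] = {"source": hit[0], "confidence": round(hit[1], 2)}
    return drafts, gaps


library = {
    "sec-001": "Customer data is encrypted at rest using AES-256",
    "dr-002": "Backups are replicated to a secondary region daily",
}
drafts, gaps = triage_questions(
    ["Is customer data encrypted at rest?", "Describe your pricing model"], library
)
print(drafts)
print(gaps)
```

The point of the sketch is the shape of the output: every draft carries a source ID, and anything without a usable source lands in the gap queue instead of being answered.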

Structuring SME Review

The worst thing you can do with AI drafting is route every answer to the same reviewer. That recreates the bottleneck you were trying to eliminate.

Category-based routing:

- Compliance and regulatory questions → compliance team or legal

- Technical architecture questions → engineering or solutions team

- Pricing and commercial questions → finance or deal desk

- Customer reference questions → customer success

Confidence-based routing:

- High-confidence answers (above your threshold) → bulk review by proposal manager

- Medium-confidence answers → specialist review with source context

- Low-confidence/no-match answers → manual authoring by SME with AI-suggested starting points
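The two routing rules combine naturally: category picks the owner, confidence picks the kind of review. A minimal sketch follows, assuming hypothetical team labels and confidence cutoffs (`high`, `low`). Questions in a category without a mapped owner fall back to the proposal manager.

```python
CATEGORY_OWNERS = {
    "compliance": "compliance_or_legal",
    "technical": "engineering_or_solutions",
    "commercial": "finance_or_deal_desk",
    "case_study": "customer_success",
}


def route(category: str, confidence: float, high: float = 0.85, low: float = 0.5) -> dict:
    """Pick a reviewer and review mode for one drafted answer.

    The high and low cutoffs are assumed values, not recommendations.
    """
    owner = CATEGORY_OWNERS.get(category, "proposal_manager")
    if confidence >= high:
        # High-confidence answers are batched for the proposal manager.
        return {"reviewer": "proposal_manager", "mode": "bulk_review"}
    if confidence >= low:
        return {"reviewer": owner, "mode": "specialist_review"}
    return {"reviewer": owner, "mode": "manual_authoring"}


print(route("technical", 0.6))
```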

Time-boxed review cycles. Set SLAs for SME review — 24 hours for standard questions, 4 hours for compliance-critical items when deadlines are tight. The AI platform should track these SLAs and escalate when reviews are overdue.
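SLA tracking is a deadline check per open assignment. The sketch below uses the 24-hour and 4-hour windows mentioned above; the assignment fields (`id`, `kind`, `assigned_at`, `done`) are a hypothetical shape, and real escalation would notify someone rather than return a list.

```python
from datetime import datetime, timedelta

SLA_HOURS = {"standard": 24, "compliance_critical": 4}


def overdue_reviews(assignments: list[dict], now: datetime) -> list[str]:
    """Return the IDs of unfinished reviews that are past their SLA."""
    late = []
    for a in assignments:
        deadline = a["assigned_at"] + timedelta(hours=SLA_HOURS[a["kind"]])
        if not a["done"] and now > deadline:
            late.append(a["id"])
    return late


now = datetime(2025, 3, 4, 12, 0)
assignments = [
    {"id": "q1", "kind": "standard", "assigned_at": datetime(2025, 3, 3, 9, 0), "done": False},
    {"id": "q2", "kind": "compliance_critical", "assigned_at": datetime(2025, 3, 4, 9, 0), "done": False},
    {"id": "q3", "kind": "compliance_critical", "assigned_at": datetime(2025, 3, 4, 7, 0), "done": False},
]
print(overdue_reviews(assignments, now))
```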

KPI: Track first-draft acceptance rate — the percentage of AI-generated answers that reviewers approve without modification. This is your clearest signal of knowledge base quality and AI effectiveness. Start by measuring it; expect 50-60% in month one and 70-80% after two quarters of active use.


Stage 4: Compliance Checks and Quality Assurance

AI drafts a proposal in hours instead of days. But if your quality assurance process takes the same three days it always did, you've just shifted the bottleneck. Compliance checks and QA need to scale with the drafting speed.

Automated Compliance Checks

AI platforms can automate the mechanical parts of compliance review:

- Consistency verification. Does the answer about your data residency in question 47 match the answer about data residency in question 183? Inconsistency across a 500-question RFP is one of the most common compliance failures — and one of the hardest to catch manually.

- Certification currency. Are the SOC reports, ISO certifications, and regulatory filings referenced in the proposal still current? Flag any answer that cites an expired or soon-to-expire document.

- Prohibited language detection. Financial services, healthcare, and government RFPs often have specific language requirements. AI can flag responses that use prohibited terms, make unsubstantiated claims, or include language that hasn't been approved by legal.

- Completeness checks. Were all required questions answered? Are mandatory attachments referenced? Do responses meet the RFP's length requirements?
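Consistency verification is the check from the list above that benefits most from automation. Here is a deliberately crude sketch: it assumes each answer has already been tagged with a hypothetical `topic` label, and it compares normalized text exactly, which would over-flag harmless rewording. A real system would compare meaning rather than strings.

```python
from collections import defaultdict


def find_inconsistencies(answers: list[dict]) -> dict[str, list[int]]:
    """Report topics whose answers differ, with the question numbers involved.

    Each answer is {"question": int, "topic": str, "text": str}.
    Text is compared after lowercasing and collapsing whitespace.
    """
    by_topic = defaultdict(dict)
    for a in answers:
        normalized = " ".join(a["text"].lower().split())
        by_topic[a["topic"]].setdefault(normalized, []).append(a["question"])
    return {
        topic: sorted(q for qs in variants.values() for q in qs)
        for topic, variants in by_topic.items()
        if len(variants) > 1  # more than one distinct answer on the same topic
    }


answers = [
    {"question": 47, "topic": "data_residency", "text": "Data is stored in the EU."},
    {"question": 183, "topic": "data_residency", "text": "Data is stored in the US."},
    {"question": 12, "topic": "encryption", "text": "AES-256 at rest"},
    {"question": 90, "topic": "encryption", "text": "aes-256  at rest"},
]
print(find_inconsistencies(answers))
```

Here the two data residency answers are flagged for a reviewer, while the two encryption answers match once normalized.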

Human QA Layer

Automated checks catch mechanical errors. Human QA catches strategic ones:

- Does the overall narrative tell a coherent story about why we're the right partner?

- Are the proof points specific enough to be credible, or do they read like generic marketing?

- Does the executive summary address the prospect's stated priorities — not our standard pitch?

- Are competitor-sensitive sections positioned effectively?

KPI: Track compliance error rate — the number of errors caught in final QA divided by total proposal questions. If this number isn't trending downward over time, your knowledge base governance (Stage 2) needs attention.

Stage 5: Deal-Close Handoffs and Outcome Tracking

The proposal goes out the door. What happens next determines whether your operation gets smarter or just gets faster.

The Handoff Gap

Most proposal teams lose visibility the moment the proposal is submitted. Sales owns the deal from that point. The proposal team doesn't know if they won, lost, or are in negotiations — and if they lose, they rarely learn why.

This gap is where AI proposal management creates its biggest long-term advantage: the outcome learning loop.

Building the Outcome Loop

Win/loss capture. Every proposal needs a recorded outcome: won, lost, no decision, or withdrawn. For losses, capture the reason — price, capability gap, timing, incumbent advantage, or compliance concern.

Answer-level feedback. When possible, capture prospect feedback at the question level. Which sections of the proposal were strong? Which raised concerns? During oral presentations, which proposal sections generated the most questions? This granular feedback feeds the AI's learning engine.

Content performance analytics. Over time, your system should show you:

- Which answer patterns correlate with winning proposals

- Which question categories have the highest reviewer override rates — indicating knowledge gaps

- Which content sources produce the most accepted first drafts

- How first-draft accuracy is trending across proposal cycles
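The reviewer override rate in that list is a simple grouped ratio. A minimal sketch, assuming you can export one `(category, was_edited)` pair per reviewed draft. That export format is hypothetical.

```python
from collections import Counter


def override_rate_by_category(reviews: list[tuple[str, bool]]) -> dict[str, float]:
    """Fraction of reviewed drafts that reviewers edited, per question category."""
    totals, edited = Counter(), Counter()
    for category, was_edited in reviews:
        totals[category] += 1
        if was_edited:
            edited[category] += 1
    return {c: edited[c] / totals[c] for c in totals}


reviews = [
    ("compliance", True), ("compliance", False), ("compliance", True), ("compliance", True),
    ("technical", False), ("technical", False),
]
print(override_rate_by_category(reviews))
```

A category with a persistently high rate is a likely sign that its source content needs attention.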

CRM synchronization. Proposal outcomes should flow back to your CRM automatically. Pipeline reports should reflect proposal submission status, and deal velocity metrics should include time-to-propose as a measured stage.

Closing the Loop

When a reviewer edits an AI-generated answer and that proposal wins, the edited version becomes the new preferred answer. When a proposal loses because of a weak compliance section, that feedback triggers a knowledge base review. When a new customer reference becomes available, it enters the content library immediately.
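Two of those rules can be sketched as a single update step that runs when an outcome is recorded. This is an illustration only: the record shape, the `question_key` field, and the action string are hypothetical, and the loss reason label follows the categories listed under win/loss capture. The function updates `preferred` in place.

```python
def apply_outcome(preferred: dict[str, str], proposal: dict) -> list[str]:
    """Update preferred answers from one finished proposal.

    proposal has "outcome" ("won", "lost", "no_decision", "withdrawn"),
    an optional "loss_reason", and "answers": a list of
    {"question_key": str, "final_text": str, "edited": bool}.
    Returns follow-up actions for the team.
    """
    actions = []
    if proposal["outcome"] == "won":
        for a in proposal["answers"]:
            if a["edited"]:
                # A reviewer-edited answer from a winning proposal becomes preferred.
                preferred[a["question_key"]] = a["final_text"]
    elif proposal["outcome"] == "lost" and proposal.get("loss_reason") == "compliance concern":
        actions.append("review compliance content in knowledge base")
    return actions


preferred = {"encryption": "We encrypt data."}
won = {
    "outcome": "won",
    "answers": [
        {"question_key": "encryption", "final_text": "Data is encrypted at rest and in transit.", "edited": True},
        {"question_key": "sso", "final_text": "SSO is supported.", "edited": False},
    ],
}
apply_outcome(preferred, won)
print(preferred)
```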

This is outcome learning — and it's the difference between an AI tool that produces the same quality in month 12 as month 1 and a system that compounds accuracy with every completed cycle.

KPI: Track proposal cycle time (intake to submission), win rate, and first-draft accuracy together, quarter over quarter. All three should improve each quarter as the outcome loop compounds.

Get Started: Build Your AI-Powered Proposal Operation with Tribble

Tribble isn't a drafting tool bolted onto your existing process. It's the platform that operationalizes every stage in this playbook — from AI-powered intake triage to content retrieval and drafting to outcome analytics that make your team smarter with every completed proposal.

Teams running Tribble at scale see the compounding effect: faster cycles, higher first-draft accuracy, and win rates that improve as the system learns what "good" looks like for their specific market. Our Customer Success team works with enterprise proposal teams to configure the full workflow — typically operational within the first week.

Already using proposal software? See how AI agents work differently from legacy RFP tools, or learn how personalization at scale keeps proposals specific without slowing your team down.

Frequently Asked Questions About Enterprise AI Proposal Management

An AI proposal writer uses retrieval-augmented generation to match incoming RFP questions against your organization's approved content library — prior approved answers, compliance documentation, product specifications, and customer evidence. Unlike generic AI writing tools that generate text from training data, enterprise AI proposal writers are grounded in your specific materials and assign confidence scores to every response. Questions that fall below your confidence threshold are flagged for human expert review rather than answered with generated text.

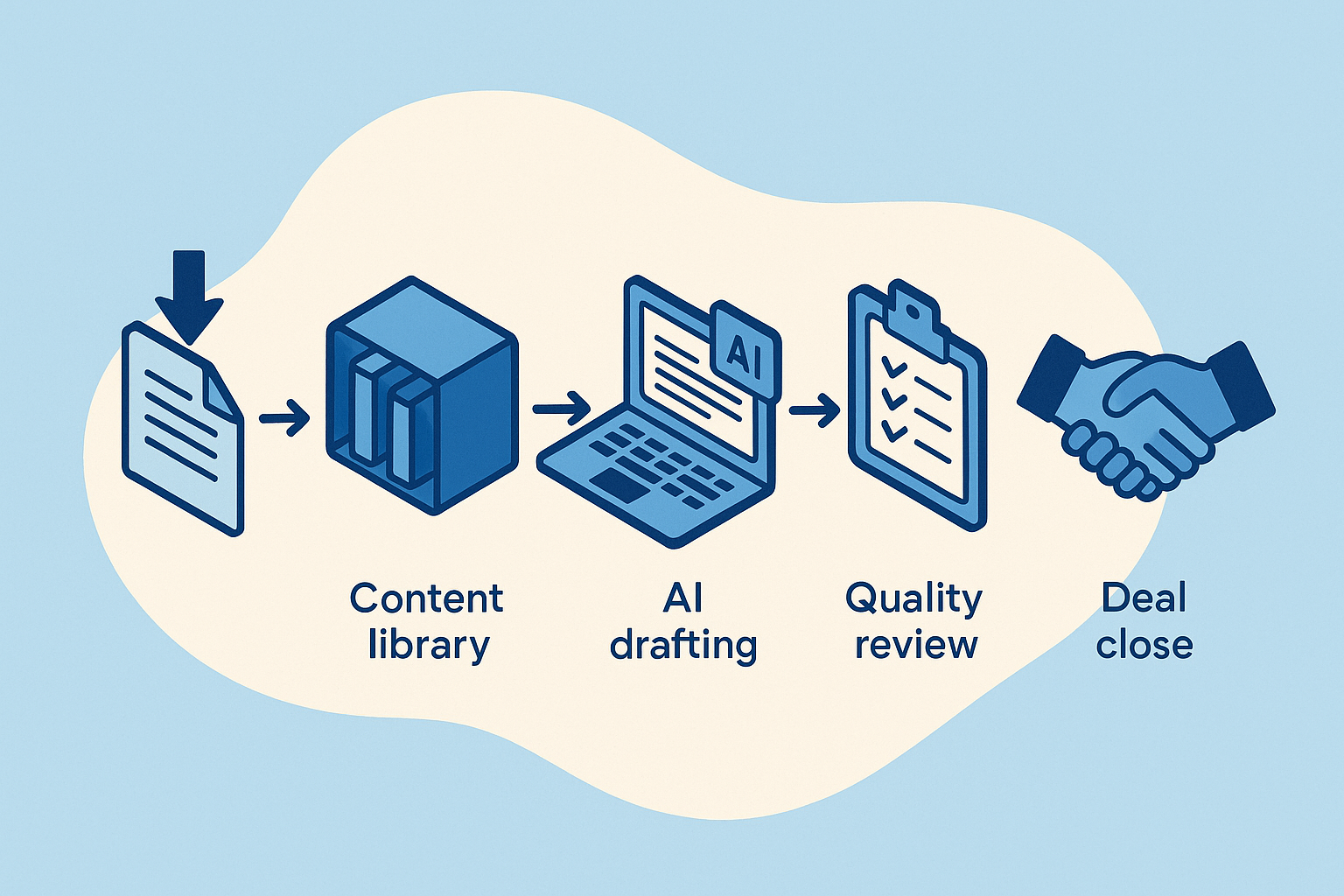

Enterprise AI proposal management covers five stages: intake triage with automated fit scoring and resource estimation, content library governance with version control and freshness alerts, AI-assisted drafting with category-based SME routing, automated compliance checks and quality assurance, and outcome tracking that feeds win/loss data back into the system. Each stage has defined KPIs and handoff criteria so the process scales without creating new bottlenecks.

Teams that implement AI proposal management as a complete system — not just a drafting tool — typically see 60-80% reductions in response cycle time, 15-25% improvements in bid-to-win ratios from better triage decisions, and progressively higher first-draft accuracy as the outcome learning engine compounds across cycles. The ROI is strongest for teams handling 20+ proposals per quarter where the volume justifies the system investment and generates enough outcome data for the learning loop to compound quickly.

Generic AI writing tools generate text from broad training data without source grounding, audit trails, or compliance workflows. Enterprise proposal management platforms retrieve answers from your approved documentation, maintain immutable records of every draft and edit for regulatory defensibility, route questions to specialized reviewers based on category and confidence level, and learn from reviewer feedback to improve over time. The difference matters most in regulated industries where audit trails and answer accuracy have compliance implications.

Enterprise platforms integrate with CRM systems like Salesforce to pull prospect data at intake and push proposal outcomes back for pipeline tracking. They connect to document management systems like SharePoint and Confluence for automatic knowledge base updates, support SSO authentication with role-based access controls, and synchronize win/loss outcomes for closed-loop reporting. The integration goal is to make proposals a measured stage in your sales process rather than a side activity that happens outside the CRM.